The AI hype is loud. The results are quieter.

AI is in every conference talk, every vendor pitch, every WhatsApp group of clinic owners. Walk into any aesthetic medicine event this year, and you’ll lose count of the booths promising to transform your consultations, your follow-ups, your everything.

But strip away the noise, and there’s a harder question worth sitting with. Which clinics are actually getting value from AI, and why aren’t most? After years of running a cosmetic clinic and building software for hundreds of others, the pattern is clear to me. AI on its own doesn’t create value. The foundations underneath it do.

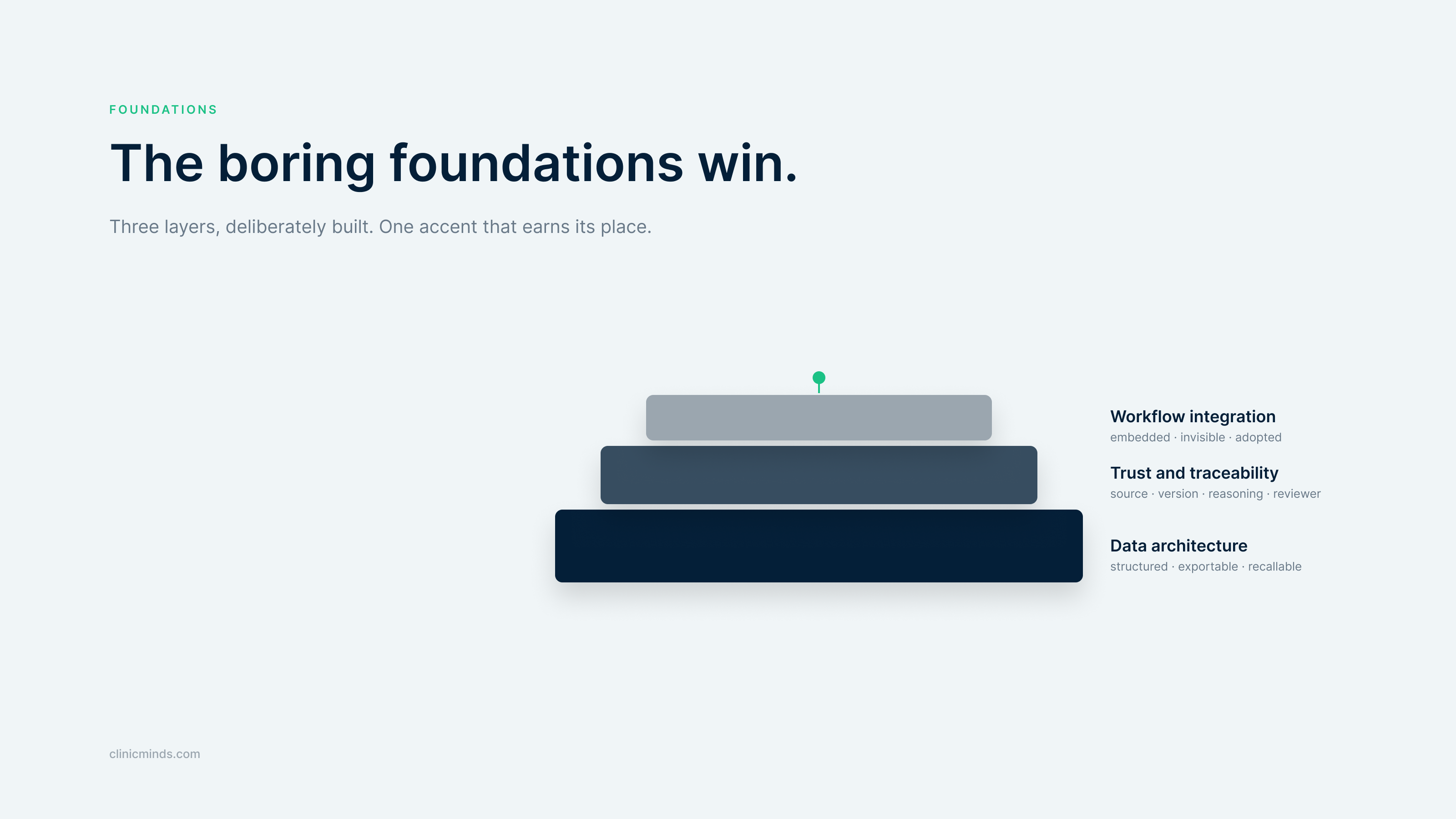

The AI layer only delivers value when the three foundations underneath it are deliberately built.

The demo always works. The deployment often doesn’t. (I’ve written before about why thorough consultation recording is the backbone of every successful aesthetic clinic, which is the same principle from the other direction.)

Most AI tools shown at aesthetic conferences look brilliant in a five-minute slot. Six weeks after the pilot starts, half of them are quietly switched off. Nobody talks about that part.

Every clinic owner I know has at least one story of a tool that looked transformative in the demo and was abandoned within two months. The reasons are rarely technical. The AI scribe that promises to save you twenty minutes per consultation only delivers if the notes land in the right patient record, in the right format, ready for follow-up and audit. If they land anywhere else, the practitioner cleans up afterward, and the time saving disappears. Within weeks, the team stops using it.

So what needs to be true underneath the AI for it to actually deliver?

Foundation 1: Your data architecture

This sounds abstract. It isn’t. Data architecture is the answer to questions you’ll one day be asked under pressure.

What did you actually inject into this patient three years ago: which batch, at which site, with whose consent? Can you produce that record in under a minute? Can you export it cleanly if you move systems? Can you run a recall on every patient who received a specific product batch?

If those questions make you uncomfortable, the issue isn’t your AI strategy. It’s the layer below it. In clinics, weak data foundations show up as failed audits, painful migrations, and records that can’t be reconstructed when it matters. Bolting AI onto messy data doesn’t fix the mess. It accelerates it.
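
To make the batch recall question concrete, here is a minimal sketch in Python using an in-memory SQLite database. The table name, column names, and sample rows are all hypothetical and stand in for whatever your own system stores. The point is only that when each treatment is a structured row carrying its batch number, a recall becomes a single query rather than a trawl through free-text notes.

```python
import sqlite3
from typing import List


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical treatments table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """
        CREATE TABLE treatments (
            patient_id   TEXT NOT NULL,
            product      TEXT NOT NULL,
            batch_number TEXT NOT NULL,
            site         TEXT NOT NULL,
            consent_ref  TEXT NOT NULL,
            treated_on   TEXT NOT NULL
        )
        """
    )
    # Invented sample rows, for illustration only.
    conn.executemany(
        "INSERT INTO treatments VALUES (?, ?, ?, ?, ?, ?)",
        [
            ("P001", "Filler A", "B-1001", "lips", "C-17", "2022-03-14"),
            ("P002", "Filler A", "B-1002", "cheeks", "C-18", "2022-03-15"),
            ("P003", "Filler A", "B-1001", "chin", "C-19", "2022-04-02"),
            ("P001", "Filler A", "B-1001", "cheeks", "C-20", "2022-06-09"),
        ],
    )
    return conn


def patients_for_batch(conn: sqlite3.Connection, batch_number: str) -> List[str]:
    """Return every distinct patient who received the given batch."""
    rows = conn.execute(
        "SELECT DISTINCT patient_id FROM treatments "
        "WHERE batch_number = ? ORDER BY patient_id",
        (batch_number,),
    ).fetchall()
    return [row[0] for row in rows]


if __name__ == "__main__":
    conn = build_demo_db()
    print(patients_for_batch(conn, "B-1001"))
```

If the batch number only exists inside a paragraph of notes, no query like this is possible, and no AI layer on top will reliably reconstruct it.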

Foundation 2: Trust and traceability

Trust is the product in healthcare. In aesthetic medicine, where patients pay privately and scrutinize every choice they make about their face, it’s the entire competitive advantage.

When AI touches patient records, four things need to be traceable: where the data came from, which version of the AI generated the output, why it produced that particular suggestion, and who reviewed it before it became part of the record. This isn’t bureaucracy. It’s what lets you sleep at night when a patient, insurer, or inspector asks the hard question.
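
One way to picture those four traceable items is as a small provenance record stored alongside every AI-generated entry. The sketch below is an assumption about how that could look, not a description of any particular product: the class name, field names, and example values are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Provenance:
    """Hypothetical provenance record attached to one piece of AI output."""
    data_source: str                   # where the input data came from
    model_version: str                 # which version of the AI generated the output
    rationale: str                     # why it produced this particular suggestion
    reviewed_by: Optional[str] = None  # who reviewed it before it entered the record


def missing_fields(p: Provenance) -> List[str]:
    """List the traceability fields that are still empty."""
    return [name for name, value in vars(p).items() if not value]


if __name__ == "__main__":
    draft = Provenance(
        data_source="consultation audio, room 2",
        model_version="scribe-1.3",
        rationale="summarised from transcript",
    )
    # Nobody has reviewed this draft yet, so one field is reported as missing.
    print(missing_fields(draft))
```

A record with any field missing is one you cannot fully explain later, which is exactly the situation the hard question exposes.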

Foundation 3: Workflow integration

This is the one I see clinics get wrong most often.

Picture a practitioner finishing a forty-five-minute filler consultation. The next patient is in the waiting room. If the AI summary lives in a separate tab that requires login, copy, paste, edit, and save, the tool adds work. The practitioner uses it twice, gets frustrated, and stops. The subscription gets canceled three months later.

If the summary writes itself directly into the patient record where the next person expects to find it, the practitioner barely notices the tool is there. They get their time back. The record is complete. The AI did its job.

The principle behind this is simple. No clinician opens their clinic in the morning thinking about which software they get to use that day. They think about the patients in the diary. Any tool that respects this and disappears into the background will survive. Anything that demands attention for its own sake gets switched off.

Five questions to ask any vendor selling you AI for aesthetics

Take this list into your next demo.

- Where does the patient data physically live, and what GDPR safeguards are in place? EU data residency is the baseline. Ask which region the servers sit in, whether the vendor has a signed Data Processing Agreement, and how they handle international data transfers. Vendors who can answer this in detail have done the work. Vendors who get vague have not.

- Where does the AI output actually land in the patient record? A single free-text blob is hard to search, report on, or migrate. Output that lands in the right structured fields (history, examination, plan) gives you the readability of narrative and the searchability of structured data.

- Can the AI output be reviewed and edited before it’s saved? Or does it write straight into the record with no chance to correct it?

- Is the AI embedded in your workflow, or a separate product? Separate products lose the adoption battle.

- Who reviews and signs off on the AI output, and can you prove it later? AI scribes should produce drafts, not final records. The clinician stays accountable, but only if there’s a clear review step and an audit trail to back it up.
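
Several of these questions come down to the same mechanism: AI output lands in structured fields as a draft, and cannot become part of the record until a named clinician signs it off. The sketch below shows one way that gate might work. It is illustrative only; the class, the function `save_to_record`, and the field names (taken from the history, examination, plan example above) are assumptions, not any vendor's actual API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Optional

SECTIONS = ("history", "examination", "plan")


@dataclass
class DraftNote:
    """Hypothetical AI-generated draft, split into structured sections."""
    history: str
    examination: str
    plan: str
    signed_off_by: Optional[str] = None
    audit_trail: List[str] = field(default_factory=list)

    def _log(self, event: str) -> None:
        # Timestamp every event so the review can be evidenced later.
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_trail.append(f"{stamp} {event}")

    def edit(self, clinician: str, section: str, text: str) -> None:
        """Let a clinician correct one section before sign-off."""
        if self.signed_off_by is not None:
            raise ValueError("note is already signed off")
        if section not in SECTIONS:
            raise ValueError(f"unknown section: {section}")
        setattr(self, section, text)
        self._log(f"{clinician} edited {section}")

    def sign_off(self, clinician: str) -> None:
        """Record who accepted accountability for the note."""
        self.signed_off_by = clinician
        self._log(f"{clinician} signed off")


def save_to_record(note: DraftNote) -> Dict[str, str]:
    """Refuse to save a draft that no clinician has reviewed."""
    if note.signed_off_by is None:
        raise PermissionError("AI draft has not been reviewed")
    saved = {section: getattr(note, section) for section in SECTIONS}
    saved["signed_off_by"] = note.signed_off_by
    return saved
```

In a demo, it is worth asking to see the equivalent of the refusal: what happens if someone tries to save a draft that nobody has reviewed?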

The order matters: foundations first, AI second

There’s a temptation right now to start with the AI question. Which scribe should we use, which automation tool, which feature? It’s the wrong starting point.

The clinics that get real value from AI almost always build the layer underneath it on purpose. Structured data from day one. A single patient record that everyone works from. Clear ownership of who reviews what before it gets saved. Workflows that were designed, not inherited from whatever system happened to be there first.

When those things are in place, the AI on top does what it promises. When they aren’t, no AI tool can compensate, no matter how good the demo looked.

The boring foundations win

AI isn’t going away, and it shouldn’t. Used well, it gives practitioners time back and patients more attentive care. But the clinics that quietly pull ahead over the next few years won’t be the ones with the loudest AI strategy. They’ll be the ones who got the foundations right first.

Pick one of the five questions above and ask it of whatever software you’re running today. The answer will tell you more about your next year than any demo will.